Forward Propagation and Backpropagation Simplified

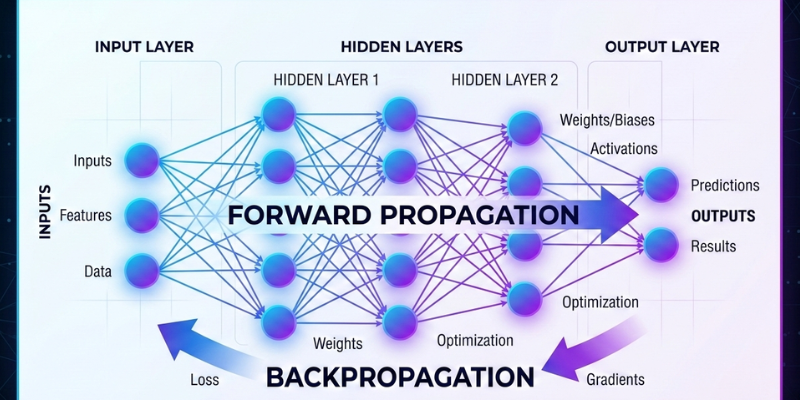

Artificial neural networks are loosely inspired by the way the human brain processes information. They consist of several layers of interconnected nodes that pass information forward, adjust their internal parameters (weights and biases), and gradually improve through training. Two core processes make this learning possible: forward propagation and backpropagation. Understanding these mechanisms is essential for anyone who wants to build a strong foundation in deep learning and neural networks.

Forward propagation is the process in which input data moves through the network to produce an output. Backpropagation is the technique that computes how much each weight contributed to the prediction error, so that an optimizer can adjust the weights accordingly. Together, they form the backbone of modern deep learning systems used in image recognition, natural language processing, and predictive analytics. If you are planning to strengthen your practical knowledge and apply these concepts in real projects, you can consider enrolling in the Artificial Intelligence Course in Bangalore at FITA Academy to gain structured guidance and hands-on exposure.

What is Forward Propagation

Forward propagation begins when input data is fed into the network's input layer. Each neuron multiplies its inputs by their assigned weights, sums the results, adds a bias term, and passes that value through an activation function. The activation function introduces nonlinearity, which allows the model to learn complex patterns that a purely linear model could not represent.
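The computation described above can be sketched for a single layer. This is a minimal illustration, assuming NumPy and a sigmoid activation; the function names `sigmoid` and `dense_forward` and all numeric values are hypothetical choices made for the example.

```python
import numpy as np

def sigmoid(z):
    """Squash any real value into the range (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def dense_forward(x, W, b):
    """One layer: weighted sum of inputs, plus bias, then activation."""
    z = W @ x + b          # pre-activation, one value per neuron
    return sigmoid(z)      # nonlinearity applied element-wise

# Illustrative values (assumed, not from the article): 2 inputs, 2 neurons.
x = np.array([1.0, 2.0])
W = np.array([[0.5, -0.25],
              [1.0,  1.0]])
b = np.array([0.0, -3.0])

a = dense_forward(x, W, b)
# Both pre-activations are 0 here, so both activations are sigmoid(0) = 0.5.
print(a)
```

Each row of `W` holds the weights of one neuron, so the matrix product computes every neuron's weighted sum in a single step.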

The result from one layer serves as the input for the subsequent layer. This process continues until the data reaches the final layer, known as the output layer. The final output represents the model’s prediction. For example, in image classification, the output could be the probability that an image belongs to a certain category.
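Chaining layers in this way might look like the following sketch. It assumes NumPy, a ReLU activation in the hidden layer, and a softmax output so that the result can be read as class probabilities; the layer sizes, the random weights, and the helper names are illustrative assumptions.

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

def softmax(z):
    # Subtract the max for numerical stability; the result sums to 1.
    e = np.exp(z - np.max(z))
    return e / e.sum()

def forward(x, layers):
    """Pass x through every (W, b) pair; each output feeds the next layer."""
    a = x
    for W, b in layers[:-1]:
        a = relu(W @ a + b)        # hidden layers
    W, b = layers[-1]
    return softmax(W @ a + b)      # output layer: class probabilities

# Hypothetical network: 3 inputs -> 4 hidden units -> 2 classes.
rng = np.random.default_rng(0)
layers = [
    (rng.normal(size=(4, 3)), np.zeros(4)),
    (rng.normal(size=(2, 4)), np.zeros(2)),
]

probs = forward(np.array([0.2, -1.0, 0.5]), layers)
print(probs, probs.sum())
```

Because the weights here are random and untrained, the probabilities carry no meaning yet; the sketch only shows how data flows from layer to layer.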

Forward propagation does not involve learning by itself. It simply computes the prediction from the current weights and biases, so the accuracy of that prediction depends on how well those parameters have been trained.

Understanding the Role of Loss Functions

After forward propagation produces a prediction, the next step is to measure how far the prediction is from the actual value. This is done using a loss function, which quantifies the discrepancy between the predicted result and the actual result as a single number.

Common loss functions include mean squared error for regression tasks and cross-entropy loss for classification problems. The value produced by the loss function tells us how well or poorly the model is performing. A higher loss indicates a larger error, while a lower loss suggests better performance.
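The two loss functions named above can be sketched as follows. This is a minimal version, assuming NumPy, a single example for cross-entropy, and a small `eps` added to avoid taking the log of zero; the sample predictions are made up for illustration.

```python
import numpy as np

def mse(y_pred, y_true):
    """Mean squared error: average of squared differences (regression)."""
    return np.mean((y_pred - y_true) ** 2)

def cross_entropy(probs, label, eps=1e-12):
    """Cross-entropy for one example: -log of the probability
    assigned to the correct class (classification)."""
    return -np.log(probs[label] + eps)

# Regression example: errors of 1 and 2 give (1 + 4) / 2 = 2.5.
mse_val = mse(np.array([2.0, 4.0]), np.array([1.0, 2.0]))

# Classification example: the true class is 0 in both cases.
good = cross_entropy(np.array([0.9, 0.1]), 0)  # confident and correct
bad = cross_entropy(np.array([0.1, 0.9]), 0)   # confident and wrong

print(mse_val, good, bad)
```

The confident wrong prediction receives a much larger loss than the confident correct one, which is the signal the training process uses to improve.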

This error value is crucial because it guides the learning process. Without measuring loss, the network would have no direction for improvement.

What is Backpropagation

Backpropagation is the technique used to work out how the weights of the network should change to reduce the loss. It calculates how much each weight contributed to the overall error by applying the chain rule from calculus, which yields the gradient of the loss function with respect to each weight. The computation starts at the output layer and moves backward through the network, one layer at a time.

The gradient points in the direction in which the loss increases fastest, so the weights are moved the opposite way. The optimization algorithm, usually gradient descent or one of its variants, updates each weight by a small step (scaled by the learning rate) in the direction that reduces the loss. This procedure is repeated for many training cycles until the model achieves a satisfactory level of accuracy.
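To make the chain rule concrete, here is a sketch for a single sigmoid neuron with a squared-error loss. The setup (one neuron, the loss `0.5 * (a - y) ** 2`, and the sample numbers) is an assumption chosen for clarity; a finite-difference estimate is included only as a sanity check on the analytic gradient.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def loss(w, b, x, y):
    a = sigmoid(w @ x + b)
    return 0.5 * (a - y) ** 2

def gradients(w, b, x, y):
    """Chain rule: dL/dw = dL/da * da/dz * dz/dw."""
    z = w @ x + b
    a = sigmoid(z)
    dL_da = a - y                 # derivative of 0.5 * (a - y)^2
    da_dz = a * (1.0 - a)         # derivative of the sigmoid
    dL_dz = dL_da * da_dz
    return dL_dz * x, dL_dz       # dz/dw = x, dz/db = 1

# Illustrative values (assumed for the example).
w = np.array([0.3, -0.2])
b = 0.1
x = np.array([1.0, 2.0])
y = 1.0

grad_w, grad_b = gradients(w, b, x, y)

# Sanity check: central finite differences should match the analytic gradient.
eps = 1e-6
numeric = np.array([
    (loss(w + eps * np.eye(2)[i], b, x, y)
     - loss(w - eps * np.eye(2)[i], b, x, y)) / (2 * eps)
    for i in range(2)
])
print(grad_w, numeric)
```

In a multi-layer network the same idea is applied repeatedly: the quantity `dL_dz` of one layer is passed backward to compute the gradients of the layer before it.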
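The update rule itself is short. The sketch below applies it to a toy one-parameter problem, minimizing `(w - 3) ** 2`, rather than to a real network; the target value 3.0, the learning rate, and the function name `gradient_descent_step` are assumptions made for illustration.

```python
def gradient_descent_step(w, grad, lr):
    """Move the weight a small step against the gradient."""
    return w - lr * grad

w = 0.0      # starting weight (assumed)
lr = 0.1     # learning rate (assumed)
history = []

for _ in range(50):
    grad = 2.0 * (w - 3.0)              # derivative of (w - 3)^2
    w = gradient_descent_step(w, grad, lr)
    history.append((w - 3.0) ** 2)      # loss after the update

print(w, history[0], history[-1])
```

With this learning rate, each step shrinks the distance to the minimum by a constant factor, so `w` ends up very close to 3. A learning rate that is too large can overshoot instead of converging.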

Backpropagation makes neural networks powerful because it allows them to learn from their mistakes. With each iteration, the model typically becomes slightly better at making predictions. If you want to practice implementing these concepts and work on industry-relevant projects, enrolling in an Artificial Intelligence Course in Hyderabad can help you gain applied skills with expert mentorship.

How Forward and Backpropagation Work Together

Forward propagation and backpropagation are two parts of the same learning cycle. First, forward propagation generates predictions. Then, the loss function measures the error. Finally, backpropagation computes the gradients of that error, and the optimizer uses them to adjust the weights.

This cycle repeats across multiple epochs during training, where one epoch is a full pass over the training data. Over time, the model's predictions become more accurate as the weights are fine-tuned. The efficiency of this learning process depends on factors such as learning rate, network architecture, and data quality.
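The whole cycle can be put together in a small training loop. The sketch below assumes NumPy, the XOR problem as toy data, a 2-4-1 network with a tanh hidden layer and a sigmoid output, binary cross-entropy loss, and plain full-batch gradient descent; none of these choices come from the article, and the hyperparameters are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy dataset (assumed): XOR, four examples with two inputs each.
X = np.array([[0., 0.], [0., 1.], [1., 0.], [1., 1.]])
y = np.array([[0.], [1.], [1.], [0.]])

# Hypothetical 2 -> 4 -> 1 network.
W1 = rng.normal(size=(2, 4)); b1 = np.zeros((1, 4))
W2 = rng.normal(size=(4, 1)); b2 = np.zeros((1, 1))
lr = 0.5

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

losses = []
for epoch in range(2000):
    # 1. Forward propagation: compute predictions.
    h = np.tanh(X @ W1 + b1)
    p = sigmoid(h @ W2 + b2)

    # 2. Loss: mean binary cross-entropy.
    loss = -np.mean(y * np.log(p + 1e-12) + (1 - y) * np.log(1 - p + 1e-12))
    losses.append(loss)

    # 3. Backpropagation: chain rule from the output back to the input.
    dz2 = (p - y) / len(X)               # gradient at the output pre-activation
    dW2 = h.T @ dz2
    db2 = dz2.sum(axis=0, keepdims=True)
    dh = dz2 @ W2.T
    dz1 = dh * (1.0 - h ** 2)            # derivative of tanh
    dW1 = X.T @ dz1
    db1 = dz1.sum(axis=0, keepdims=True)

    # 4. Gradient descent update.
    W1 -= lr * dW1; b1 -= lr * db1
    W2 -= lr * dW2; b2 -= lr * db2

print(f"initial loss {losses[0]:.4f}, final loss {losses[-1]:.4f}")
```

Each pass through the loop is one epoch here because the whole dataset is used at once. In practice, frameworks compute the backward pass automatically, but the four steps remain the same.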

Understanding this interaction helps learners grasp why neural networks require both computation and optimization. Without forward propagation, there would be no predictions. Without backpropagation, there would be no improvement.

Forward propagation and backpropagation form the foundation of deep learning. Forward propagation computes predictions by passing data through layers, while backpropagation refines the model by minimizing error through weight updates. Together, they enable neural networks to learn patterns, recognize images, process language, and solve complex problems.

Anyone hoping to pursue a career in machine learning or artificial intelligence must have a firm understanding of these foundational concepts. If you are ready to build strong practical expertise and advance your career, consider signing up for the AI Course in Ahmedabad to gain structured training and hands-on experience in neural network fundamentals.

